TL;DR. Most AI agent risks at a small business have nothing to do with the model. They are deployment-design failures that show up in week three: the agent freezes on out-of-script questions, the cold-list reactivation breaches TCPA or GDPR, voice-minute and token costs bleed margin once volume spikes, sales reps quietly stop sending calls through the scoring system, and the contract assumed zero human review when reality is four-to-eight hours per week. Five walls, all preventable with a Day-0 design review. Pattern documented from devil's-advocate reviews on 52 agency offers between March and May 2026. The fix for each wall is a specific design choice — explicit escalation rules, jurisdiction-segmented consent logs, usage caps with variable-cost pass-through, rep-first feedback delivery, and a priced human-review allowance.

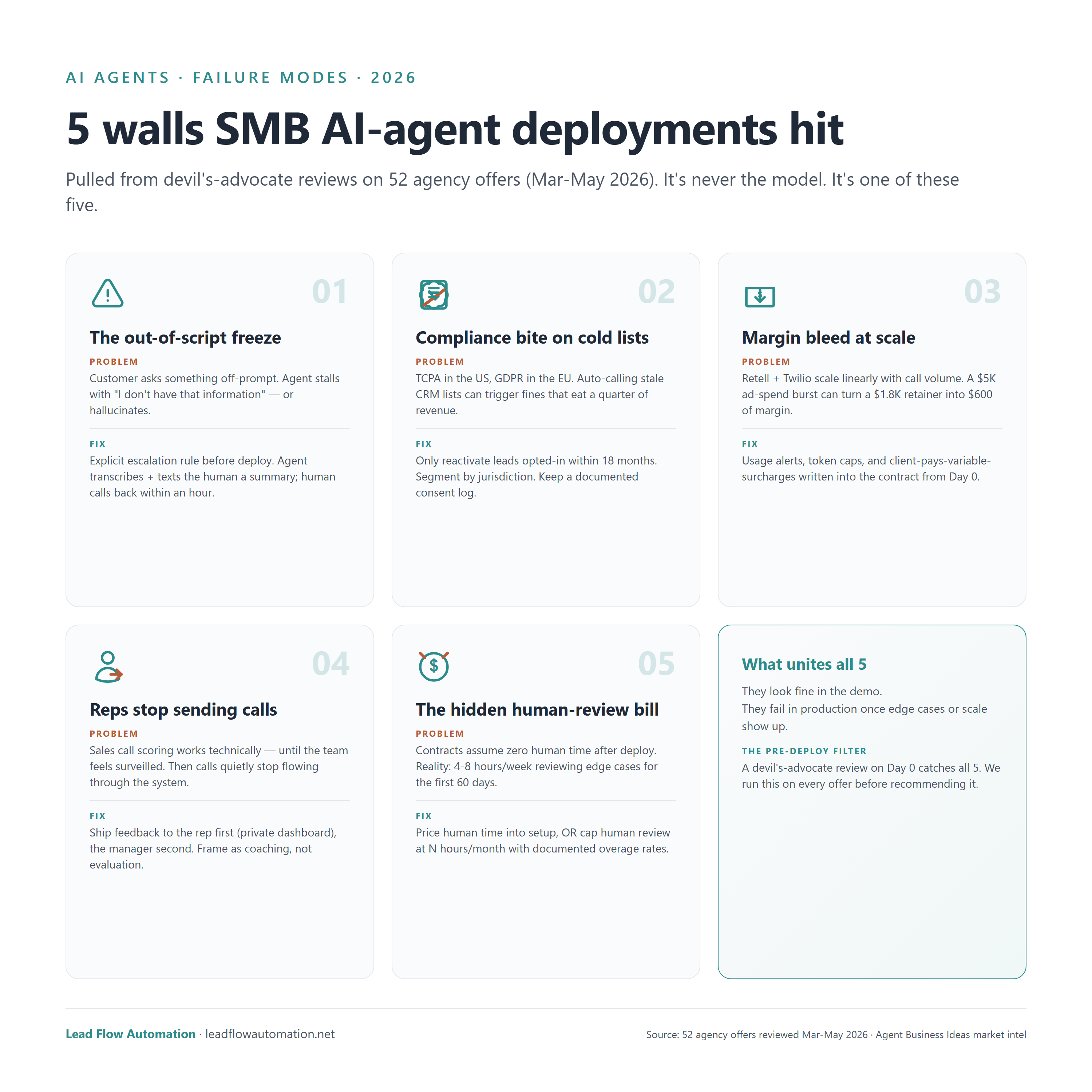

Across 52 documented agency offers for SMB AI-agent deployments reviewed March–May 2026, the pattern is consistent: the demos all look fine. The failures happen in production once edge cases or real volume show up. Below are the five specific walls — and the design choice that prevents each one. None of them are model-quality issues. All of them are decisions a buyer should pin down before wiring a setup fee.

What are AI agent risks for a small business?

AI agent risks for a small business are the failure modes that show up after deployment — not in the sales demo. The dominant security-and-malware framing in technical press is relevant for enterprise IT, but it is not what kills a typical SMB deployment. The walls that actually break SMB agents are operational: scope creep beyond the prompt, regulatory exposure on outbound contact, runaway infrastructure cost, behavioral pushback from the team using the agent, and an undercosted maintenance burden.

The risks are not theoretical. They show up in week three of production, after the buyer has paid setup and is now watching the first real edge cases hit the agent. The good news: each wall has a specific, repeatable design fix that a deployment-ready vendor will have already built in.

The 5 walls that break SMB AI-agent deployments

A devil's-advocate review on Day 0 catches every one. Each is a design decision, not a model limitation.

1. The out-of-script freeze

A customer asks something the prompt did not anticipate — a refund question on a sales agent, a parts-availability check on a receptionist, a multi-step troubleshooting case on an intake agent. The agent either says "I don't have that information" (best case) or hallucinates a confident wrong answer (worst case). Either response costs the business the call and erodes trust in the system within days.

The fix: Design an explicit escalation rule before deployment. The agent should detect a confidence threshold or an unrecognized intent, transcribe the conversation, and text or email a human a summary with a one-tap callback link. The customer hears "let me have someone get back to you within the hour" — which is a better outcome than the demo case where the agent improvises.

Common stack: Retell AI or Vapi for the voice layer; n8n or Make for the escalation routing; a Slack or Telegram bot for the human-side notification.

2. Compliance bite on cold lists

This wall specifically hits lead-reactivation and outbound-call agents. The US Telephone Consumer Protection Act (TCPA) and the EU General Data Protection Regulation (GDPR) both restrict cold outreach to phone and SMS contacts. Auto-dialing a stale CRM list with an AI agent is functionally the same as auto-dialing it manually — the regulations do not distinguish — and the penalty schedule can eat an entire quarter of agency revenue in a single enforcement action.

The fix: Only reactivate leads who opted in within the last 18 months. Segment the list by jurisdiction so EU contacts run through GDPR-compliant consent gating and US contacts go through TCPA-compliant time-of-day and quiet-hours filters. Keep a documented consent log accessible to compliance counsel. The agency builds this into the agent's contact-selection logic, not as an afterthought.

A vendor who waves off the compliance question, or treats it as the client's problem, has not built a deployment-ready product.

3. Margin bleed when volume spikes

This is the wall that surprises agencies after they ship. Voice-agent infrastructure — Retell, Vapi, Twilio — charges per minute. Token usage on the underlying LLM scales linearly with conversation length. A flat monthly retainer that looks profitable at 200 calls per month becomes a loss leader the month the client runs a paid-ad burst and drives ten times the volume into the agent.

The dollar math: A $1,800/month retainer is comfortable at 200 calls × 4 minutes × $0.08 voice-minute cost = $64 of voice + ~$40 of tokens. Multiply the call volume by ten during an ad campaign and the same retainer covers $640 of voice + $400 of tokens + $600 of operator time on overage tickets. The agency is now subsidizing the client.

The fix: Usage alerts at 50%, 75%, and 90% of an agreed monthly minute or token budget. Variable-cost pass-through clauses in the contract — the client pays anything above the included tier. A hard cap on outbound voice minutes per day to prevent a runaway loop from billing $3,000 overnight. None of these are exotic; all of them belong in the master service agreement before the first call goes live.

4. Reps quietly stop sending calls

This wall is unique to per-call sales-coaching agents. The system works technically: the agent transcribes the call, scores the rep on a defined rubric, and emails the feedback. But two weeks in, the manager notices call volume into the scoring system is down 60%. The reps have not been told to stop using it. They have just quietly stopped uploading recordings.

The reason is behavioral. If the rep's manager sees the score before the rep does, the system feels like surveillance. Once a sales team perceives an internal tool as surveillance, the tool is dead — they will find workarounds, route their best calls through a personal phone, or simply not log the worst ones.

The fix: Send the feedback to the rep first, the manager second. Give the rep a private dashboard they can review for a week before scores roll up to management. Frame the product as coaching ("here are three things to try on your next call") not as evaluation ("you scored 6/10"). Once reps see their own private dashboard and recognize it is genuinely helping them close more deals, the social dynamics flip and the system becomes sticky.

5. The hidden "human review" bill

Most SMB AI-agent contracts assume that after deployment, the agency monitors passively and the client benefits autonomously. The reality is that the first 60 days require four-to-eight hours per week of human review of edge cases, prompt tuning, transcript audits, and escalation-rule refinements. That is real labor the agency either prices in or eats.

The contracts that fail to price this in turn the deployment into a margin-negative project by week six. The agency burns operator time on review without invoicing for it, the client never sees that work happening, and renewal conversations get awkward because the relationship has been quietly eroding.

The fix: Price the first 60 days of human review into the setup fee, or build an explicit human-review allowance into the retainer (e.g., "4 hours/month included; overage at $150/hour"). Either approach makes the labor visible and aligns incentives. The buyer is not surprised by an invoice; the agency is not subsidizing the deployment out of margin.

What unites all five walls

The five risks share three structural traits:

- They look fine in the demo. Every one of these failures is invisible during a sales walkthrough. The demo runs on prepared inputs; the failures hit on unprepared edge cases at real volume.

- They are design decisions, not model limitations. Switching to a smarter model does not solve any of the five. Better prompting does not solve them either. They are solved by changing the deployment architecture — escalation rules, compliance gating, usage caps, feedback routing, review pricing.

- A Day-0 devil's-advocate review catches every one. The pattern is consistent enough across the 52 reviewed offers that a checklist of five questions, asked before signing, will surface the design gap in every case.

That checklist is the next section.

How to evaluate an AI agent vendor on deployment risk

Five questions a non-technical SMB founder should ask any AI agent vendor before wiring the setup fee. A vendor who hand-waves any of the five is not deployment-ready.

- What is the escalation rule when the agent encounters an out-of-script question? The answer should name the trigger (low confidence score, unrecognized intent, explicit customer request), the action (transcribe and notify), and the human SLA (callback within X hours). "The model is smart enough" is not an answer.

- For outbound-contact agents — how do you handle TCPA and GDPR? The vendor should describe consent-log retention, jurisdictional segmentation, opt-in recency rules, and time-of-day filters without prompting.

- What happens to my retainer if call volume doubles next month? A deployment-ready vendor has usage tiers, alerts, and a contractual variable-cost pass-through. An unprepared vendor says "we'll handle it" and then renegotiates uncomfortably in week six.

- How does the agent's feedback reach my sales team? For coaching agents specifically — the answer should be rep-first, manager-second, with a private dashboard. Any other answer predicts the calls-stop-flowing failure.

- How many hours of human review are priced into the first 60 days? The honest answer is between 30 and 60 hours total. A vendor quoting zero is either inexperienced or planning to eat the cost (which means they will lose money on your deployment and the relationship will end badly).

Frequently asked questions

What is the failure rate for SMB AI agent deployments?

Industry convention across 52 documented agency offers reviewed March–May 2026 suggests most SMB AI-agent deployments either fail outright or significantly underperform expectations within the first 30 days. The failures are concentrated in five specific design areas — out-of-script handling, compliance, cost scaling, behavioral adoption, and undercosted human review — not in the underlying AI model.

Are AI agent risks the same as AI security risks?

No. AI security risks — prompt injection, data exfiltration, model jailbreaks — are real and dominate the enterprise IT conversation. AI agent risks for a small business are operational: deployment-design failures that surface in production. An SMB AI-agent deployment is far more likely to fail because of an unhandled TCPA scenario or runaway voice-minute billing than because of a security exploit.

How much does the human-review burden of an AI agent actually cost?

Industry convention for the first 60 days of an SMB AI-agent deployment is 4–8 hours per week of human review — prompt tuning, edge-case audits, escalation-rule refinement. At a typical agency hourly rate of $100–$200, that is roughly $1,600–$6,400 of labor in the first two months. Vendors who do not price this in are either inexperienced or will renegotiate when the bill comes due.

Can an SMB run an AI agent without a developer on staff?

Yes, provided the agency handles deployment, monitoring, and prompt updates. The owner approves edge cases through a Slack or Telegram bot. The risk shifts from 'do we have a developer?' to 'does our vendor have a documented escalation, compliance, and review process?' — which is the right question.

What is the most common AI agent failure mode at small businesses?

The out-of-script freeze. A customer asks something not in the prompt, the agent either deflects or hallucinates, and the customer's confidence in the system collapses within days. The fix is an explicit escalation rule built into the agent before launch, not a smarter model.

Do AI agent risks apply to text-based or chat-only agents the same way as voice agents?

Partially. Out-of-script freeze, compliance exposure on outbound contact, hidden human review, and behavioral pushback all apply to text agents. The margin-bleed wall is less acute because text infrastructure costs less than voice infrastructure — but token costs still scale linearly with volume, so the underlying risk pattern is the same.

How long should an SMB AI agent be shadow-monitored before going fully autonomous?

Industry convention is 1–2 weeks of monitored shadow operation, followed by 60 days of weekly review at 4–8 hours per week. Going fully autonomous on day one is the single most common cause of the out-of-script freeze becoming a public incident.

Sources and methodology

- TCPA (US Telephone Consumer Protection Act, 47 U.S.C. § 227) and GDPR (EU General Data Protection Regulation, Regulation 2016/679) — cited as the regulatory floors for cold-list reactivation agents.

- Retell AI, Vapi, and Twilio public pricing — referenced for the voice-minute and per-call cost scaling discussion.

- 52 agency offers analyzed from public Upwork postings and agency landing pages, March–May 2026 — internal market-intelligence database (Lead Flow Automation Agent Business Ideas pool).

- Devil's-advocate reviews on agency offers including AI answering services, lead reactivation systems, speed-to-lead callbacks, sales coaching agents, and intake automations.

About the author

Gergely Zsigmond runs Lead Flow Automation, an AI-automation agency specializing in deployment-ready agent systems for service businesses. Previously built a production retrieval-augmented generation (RAG) chatbot for the engineering team of a $30B/yr multinational firm, in daily use across the organization. 10+ years in AI, 3 years dedicated to LLM-based software development. Conducts devil's-advocate reviews on every agency offer before recommending it to a buyer. Based in Budapest; serves clients in the US, EU, and APAC.

Reach the agency at leadflowautomation.net.